Mask Sensitive LLM Prompts and Completions

Use Pipelines on your LLM Route to avoid sending sensitive user or document text downstream in plain text, while keeping the fields you need for debugging, governance, and cost monitoring.

Many LLM-related fields can hold user content or retrieved material:

- Prompt and completion text

- Chat message content (user and assistant messages)

- Retrieved document or context content

Prerequisites

- LLM telemetry flowing into Cribl Stream on a Route you can edit.

- An inventory of which attributes may contain prompts, completions, or retrieved documents.

For generic field naming patterns, see LLM Telemetry Use Cases in Cribl.
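One way to start the attribute inventory is to scan a sample of captured events for long string values. The following is a minimal sketch, not a Cribl feature: the attribute names (`prompt`, `completion`, `model`, `total_tokens`), the sample events, and the `min_length` threshold are all assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical sample events; attribute names are illustrative only.
SAMPLE_EVENTS = [
    {"model": "example-model",
     "prompt": "Summarize the attached contract for Jane Doe.",
     "total_tokens": 42},
    {"model": "example-model",
     "completion": "The contract states that ...",
     "total_tokens": 58},
]


def inventory_text_fields(events, min_length=20):
    """Count, per field name, how many events carry a string of at least min_length characters."""
    counts = defaultdict(int)
    for event in events:
        for name, value in event.items():
            # Long free-text strings are the likeliest carriers of user content.
            if isinstance(value, str) and len(value) >= min_length:
                counts[name] += 1
    return dict(counts)


candidates = inventory_text_fields(SAMPLE_EVENTS)
```

Fields that show up in `candidates` are only candidates for review. Short identifiers such as model names fall below the threshold, and a short prompt could too, so treat the result as a starting list rather than a complete inventory.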

Redact Sensitive Attributes

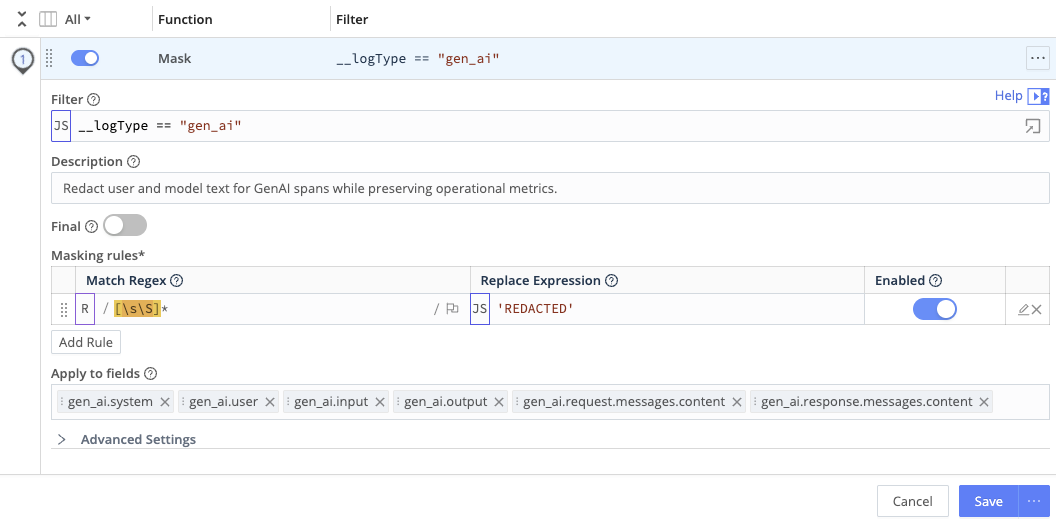

Add Mask Functions (with filters scoped to LLM spans or events) so prompts, completions, and retrieved text are redacted before data leaves Stream.

- On the Route that handles your LLM spans, attach a Pipeline.

- Add one or more Mask Functions (or, alternatively, a Guard Function) to redact or obfuscate attributes that may contain sensitive content. Scope each Function with a filter so it runs only on LLM-related spans or events, using the classification fields your telemetry provides. For example, you might:

- Replace content with a fixed token (for example, REDACTED).

- Hash or tokenize content so you can correlate repeated values without exposing raw text.

- Keep non-sensitive operational fields where policy allows, such as:

- Model or system identifiers (for example, model name or family).

- Token usage (total, prompt, completion).

- Cost fields, if present.

- High-level metadata (for example, user or tenant identifiers, session IDs, tags).
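The masking choices above can be sketched outside of Stream to make the intended behavior concrete. This Python sketch only illustrates the logic and is not the Mask Function's configuration or API. The field names, the `span_kind` classification field, and the salt are hypothetical; substitute the attributes from your own inventory.

```python
import hashlib

# Hypothetical attribute names that may carry sensitive text.
SENSITIVE_FIELDS = {"prompt", "completion", "retrieved_context"}
REDACTION_TOKEN = "REDACTED"


def hash_value(text, salt="example-salt"):
    """Return a short, stable SHA-256 digest so repeated values can be correlated."""
    # Assumption: in practice the salt would be a secret, not a literal.
    return hashlib.sha256((salt + text).encode("utf-8")).hexdigest()[:16]


def is_llm_event(event):
    """Filter: match only events classified as LLM spans (hypothetical field)."""
    return event.get("span_kind") == "llm"


def mask_event(event, mode="redact"):
    """Return a copy with sensitive string fields masked; other fields pass through."""
    masked = {}
    for name, value in event.items():
        if name in SENSITIVE_FIELDS and isinstance(value, str):
            masked[name] = REDACTION_TOKEN if mode == "redact" else hash_value(value)
        else:
            masked[name] = value
    return masked


def process(event, mode="redact"):
    """Mask only LLM events, mirroring a filter-scoped Function."""
    return mask_event(event, mode) if is_llm_event(event) else event


example = {
    "span_kind": "llm",
    "model": "example-model",
    "prompt": "My account number is 12345.",
    "total_tokens": 12,
}
masked = process(example)
# masked["prompt"] is now "REDACTED"; model and total_tokens are unchanged.
```

Calling `process(example, mode="hash")` instead yields the same digest for identical prompts, which supports correlation without storing the raw text. Hashing short or predictable values can still be guessable, so a fixed token is the safer default where correlation is not required.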