Route LLM Telemetry to Multiple Destinations

Use Routes in Cribl Stream so LLM telemetry reaches the backends you rely on for observability, security, and analytics, such as a primary APM or tracing tool, a cost-analytics index, a data lake, or Cribl Search.

After LLM data enters Stream, define a Route that matches LLM traffic, then send matching events to one or more Destinations. You can clone the same spans to multiple outputs when teams need different views of the same traffic.

Route LLM Traffic in Stream

Create a Route that identifies LLM-related events from your OTel Source (or other inputs), then send or clone them to the Destinations each team needs.

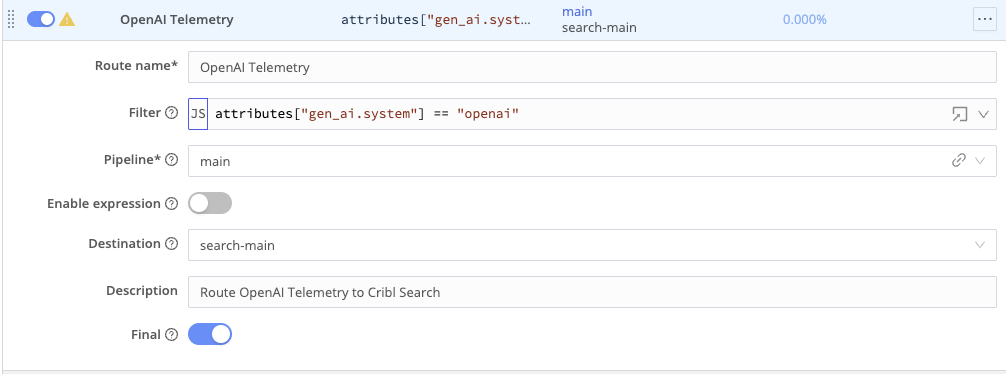

- In Routing > Data Routes, add a Route that matches your LLM traffic. For example:

  - Match events from your OpenTelemetry Source by Source input ID (for example, an expression like `__inputId == 'otel_llm_in'`, or your actual input ID).

  - Narrow to your LLM app or service using your service name field (for example, `service.name == "my-llm-application"`).

  - Limit to spans that represent LLM or related workflow operations using whatever span kind, category, or component type fields your instrumentation exposes for model calls, retrieval, tools, agents, and similar work.

  - Combine any fields your instrumentation provides to define “LLM traffic” for your environment.

- In Send to:

  - Choose your primary observability backend as the main Destination for full traces.

  - Optionally clone the same spans to other Destinations (for example, a cost analytics index, data lake, or developer sandbox).
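To reason about which events a Route like this would match and which outputs would receive them, the two steps above can be modeled in a few lines of plain JavaScript. This is only a sketch for thinking through the logic, not Cribl configuration or a Cribl API. The `span_kind` field, its values, the nested layout of `service.name`, and the Destination names are all assumptions made for illustration; substitute whatever your instrumentation actually emits.

```javascript
// Illustrative model only: plain JavaScript, not Cribl configuration or API.
// `span_kind`, its values, and the Destination names are hypothetical.
const LLM_SPAN_KINDS = new Set(['llm', 'retriever', 'tool', 'agent']);

// Mirrors a Route filter that combines the three example criteria:
// Source input ID, service name, and span kind.
function isLlmTraffic(event) {
  return (
    event.__inputId === 'otel_llm_in' &&
    event.service?.name === 'my-llm-application' &&
    LLM_SPAN_KINDS.has(event.span_kind)
  );
}

// Gives each Destination its own deep copy of every matching event,
// so changes made for one output cannot leak into another.
function fanOut(events, destinations) {
  const delivered = new Map(destinations.map((name) => [name, []]));
  for (const event of events.filter(isLlmTraffic)) {
    for (const name of destinations) {
      delivered.get(name).push(JSON.parse(JSON.stringify(event)));
    }
  }
  return delivered;
}

// Sample events: only the first one satisfies all three criteria.
const samples = [
  { __inputId: 'otel_llm_in', service: { name: 'my-llm-application' }, span_kind: 'llm' },
  { __inputId: 'otel_llm_in', service: { name: 'billing-api' }, span_kind: 'llm' },
  { __inputId: 'syslog_in', service: { name: 'my-llm-application' }, span_kind: 'tool' },
  { __inputId: 'otel_llm_in', service: { name: 'my-llm-application' }, span_kind: 'http' },
];

const delivered = fanOut(samples, ['primary_apm', 'cost_index', 'data_lake']);
for (const [name, events] of delivered) {
  console.log(`${name}: ${events.length} event(s)`);
}
```

Running sample events through a predicate like `isLlmTraffic` before you build the Route is a quick way to check that your definition of LLM traffic is neither too broad nor too narrow for your environment.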

Prerequisites

- LLM-related telemetry flowing into Cribl Stream.

- At least one Destination configured for downstream analysis.

- Familiarity with your schema (for example, fields for service name, span type, and environment). For typical field names, see LLM Telemetry Use Cases in Cribl.

Related Topics

- LLM Telemetry Use Cases in Cribl – shared prerequisites and field mapping reference

- Mask sensitive LLM prompts and completions

- Emit LLM cost and usage metrics from token counts

- Explore LLM telemetry in Cribl Search