Organize Data with Dataset Rules

Once you’ve created your Search Datasets, set Dataset rules to control which events land in which Dataset.

Highlights

- Each Dataset rule routes matching events to a specified Search Dataset.

- Each Search Dataset has its own retention period, from 1 day to 10 years.

- Verify Dataset assignment to make sure events land where you expect.

Dataset Rules Overview

Each Dataset rule captures events that match a KQL expression, and then sends those events to the specified Search Dataset.

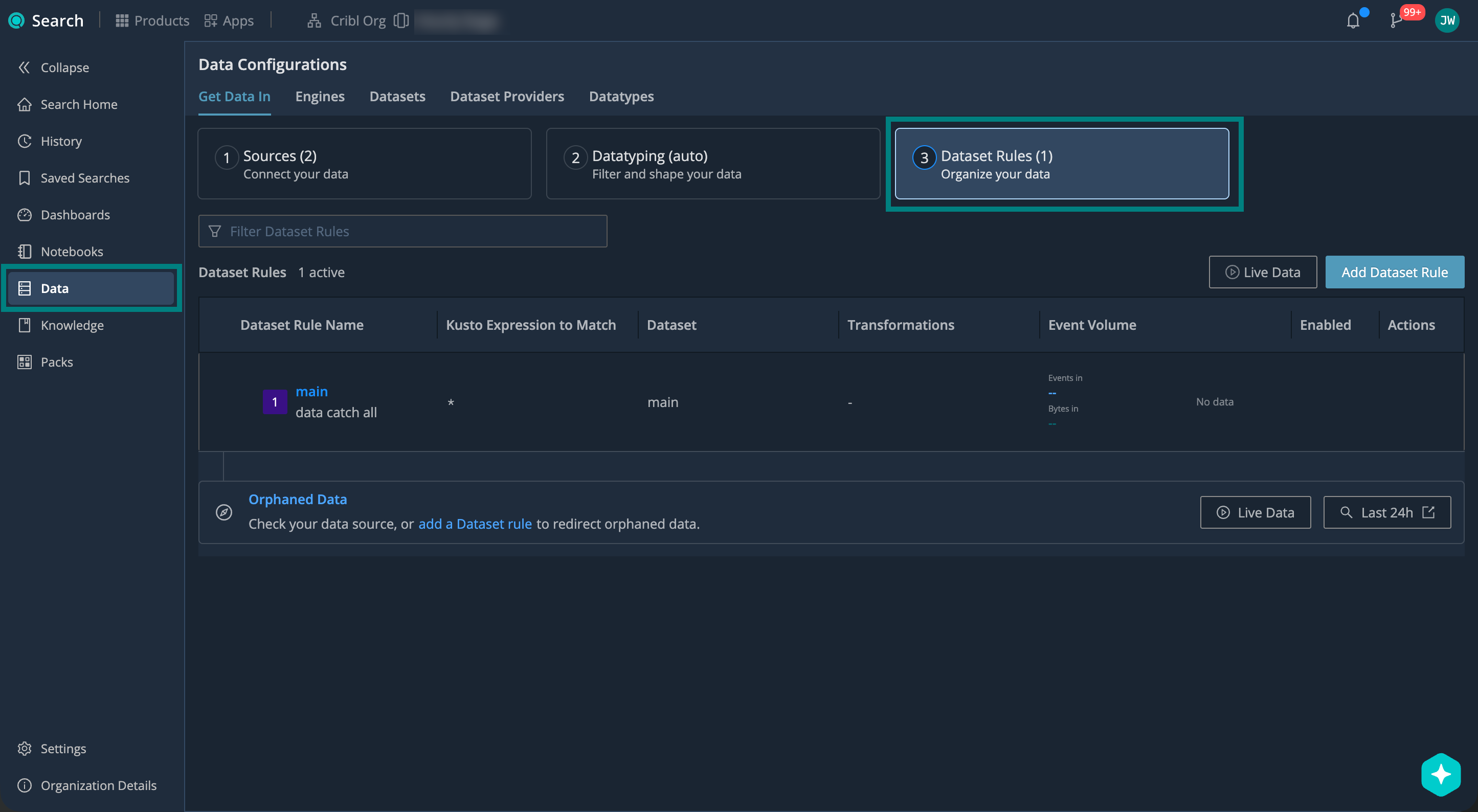

To manage your Dataset rules: from the Cribl.Cloud top bar, select Products > Search > Data > Get Data In > 3. Dataset Rules.

Add Dataset Rules

To add a new Dataset rule:

- On the Cribl.Cloud top bar, select Products > Search > Data > Get Data In > 3. Dataset Rules.

- Select Add Dataset Rule. Name and describe your rule.

- In Kusto expression to match, enter a KQL expression that matches events you want to route.

See Dataset Rule Expressions for syntax and examples.

- In Send data to, choose your target Search Dataset. This is where events matching the KQL expression will land.

You can also select Drop to instead discard the events.

- In Modify fields, you can perform Dataset-specific enrichment or normalization. See Modify Fields in Search Datasets.

- Make sure that Enabled in the top right corner is checked, and confirm with Add.

If you add more rules, drag them to change the order. Rules run top-down, and the first match wins. Put more specific rules above broader ones.

Events that don’t match any rule, or match a rule pointing to a deleted Dataset, fall back to the main Dataset.
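The ordering and fallback behavior described above can be modeled in a few lines of Python. This is only a conceptual sketch, not Cribl Search code: the Rule class, the route function, and the Dataset names syslog_my_source, syslog_all, and old_vpc_flows are hypothetical, and each rule's KQL expression is replaced by a plain Python predicate (which, unlike KQL's =, compares case-sensitively).

```python
from dataclasses import dataclass
from typing import Callable, Optional

Event = dict


@dataclass
class Rule:
    """Hypothetical stand-in for a Dataset rule (not a Cribl API)."""
    name: str
    matches: Callable[[Event], bool]  # plays the role of the KQL expression
    target: str                       # a Dataset name, or "Drop"


def route(event: Event, rules: list, existing: set) -> Optional[str]:
    """Return the Dataset an event lands in, or None if it is dropped."""
    for rule in rules:               # rules run top-down
        if rule.matches(event):      # first match wins
            if rule.target == "Drop":
                return None
            # A rule that points to a deleted Dataset falls back to main.
            return rule.target if rule.target in existing else "main"
    return "main"                    # no rule matched


rules = [
    # Specific rule first: syslog from one Source only.
    Rule("one syslog source",
         lambda e: e.get("datatype") == "syslog_rfc3164"
         and e.get("__inputId") == "syslog:my_source_id",
         "syslog_my_source"),
    # Broader rule below it: all remaining RFC 3164 syslog.
    Rule("all syslog",
         lambda e: e.get("datatype") == "syslog_rfc3164",
         "syslog_all"),
    Rule("noise",
         lambda e: e.get("datatype") in ("healthcheck", "heartbeat"),
         "Drop"),
    Rule("stale target",
         lambda e: e.get("datatype") == "aws_vpc_v2",
         "old_vpc_flows"),
]
existing = {"main", "syslog_my_source", "syslog_all"}  # old_vpc_flows was deleted

event = {"datatype": "syslog_rfc3164", "__inputId": "syslog:my_source_id"}
print(route(event, rules, existing))
```

If the two syslog rules swapped places, the broader rule would match first and the specific one would never fire, which is why specific rules belong above broader ones.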

Dataset Rule Expressions

Point your Dataset KQL expressions at these fields:

| Field | Description |
|---|---|
| datatype | Datatype assigned through Datatyping. |
| __inputId | Source identifier in type:id format. Supported types: cribl_http, datadog_agent, elastic, http_raw, open_telemetry, prometheus_rw, splunk, splunk_hec, syslog, tcp, tcpjson, wef, wiz_webhook. Example: syslog:my_source_id. |

You can also filter by any other field in your parsed data.
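If you generate rule expressions from a script, a small helper can keep the type:id format consistent. The sketch below is an illustration only: input_id_expression is a hypothetical function, not part of any Cribl tool, and it assumes that the Source ID contains no double quotes.

```python
# Source types listed in the table above.
SUPPORTED_TYPES = frozenset({
    "cribl_http", "datadog_agent", "elastic", "http_raw", "open_telemetry",
    "prometheus_rw", "splunk", "splunk_hec", "syslog", "tcp", "tcpjson",
    "wef", "wiz_webhook",
})


def input_id_expression(source_type: str, source_id: str) -> str:
    """Build a rule expression that matches one Source (hypothetical helper)."""
    if source_type not in SUPPORTED_TYPES:
        raise ValueError(f"unsupported Source type: {source_type!r}")
    if not source_id:
        raise ValueError("Source ID must not be empty")
    # Assumes source_id needs no escaping inside the quoted string.
    return f'__inputId = "{source_type}:{source_id}"'


print(input_id_expression("syslog", "my_source_id"))
# prints: __inputId = "syslog:my_source_id"
```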

As with Datatype rule expressions, you can:

- Create KQL expressions that evaluate to true/false for matching events.

- Set case-insensitive conditions using = and wildcards (*).

- Pipe into | where ..., | find ..., or | search ... for richer logic.

But:

- You can’t use expressions that aggregate or reshape data (such as stats or project).

- You can’t use let or set statements.

See the examples below. For full reference on the Cribl Search implementation of KQL, see Language Reference.

Matches all events of Datatype apache_httpd_accesslog_common:

    datatype = "apache_httpd_accesslog_common"

Matches RFC 3164 syslog events from the my_source_id Source only. You can use rules like this to separate syslog from one Source into its own Dataset:

    datatype = "syslog_rfc3164" and __inputId = "syslog:my_source_id"

Matches AWS VPC Flow Logs v2 events where the parsed host field equals vpc-flow-logs:

    datatype = "aws_vpc_v2" | where host = "vpc-flow-logs"

Matches all events from an OpenTelemetry Source with ID otel. You can use rules like this when you want one Dataset per Source:

    __inputId = "open_telemetry:otel"

Matches healthcheck and heartbeat events, using custom Datatypes. You can route them to Drop to save storage:

    datatype in ("healthcheck", "heartbeat")

Verify Dataset Assignment

Check on your Search Datasets to make sure your events are routed and retained as expected.

- Go to Search Home: On the Cribl.Cloud top bar, select Products > Search.

- Under Available Datasets, select a Search Dataset you want to inspect.

Search Datasets are marked with the lakehouse icon.

- In the resulting details panel, look at the Fields section.

If the Fields section is empty, select Retry to load the metadata.

If there’s no Fields section at all, you’re looking at a federated Dataset. Select a Search Dataset instead.

- Verify that the Dataset contains the fields you’d expect from your Datatyping configuration and Dataset rules.

For more information, see Explore Fields in Search Datasets.

For more ways to explore your Datasets, see Inspect Your Datasets.

Modify Fields in Dataset Rules

You can enrich or normalize events on their way into a specific Search Dataset, without affecting your upstream Datatyping rules. Use this to normalize timestamps, convert units, or reshape values, while preserving the Auto-Datatyping flow.

- On the Cribl.Cloud top bar, select Products > Search > Data > Get Data In > 3. Dataset Rules.

- Select Add Dataset Rule (or edit an existing one). For details, see Add Dataset Rules.

- Select Modify fields.

- In the text box, write a KQL extend expression to add or overwrite fields on matched events.

See the examples below. For full reference on the Cribl Search implementation of KQL, see Language Reference.

Offset _time based on the host field so that events from different regions align to the same timezone:

    _time = case(host startswith "eastcoast", _time + 3h, host startswith "westcoast", _time + 6h, _time)

Convert a bytes field to megabytes and store the result in a new field:

    size_mb = bytes / 1048576.0

Map verbose severity levels to a simplified priority field:

    priority = case(level == "CRITICAL" or level == "FATAL", "high", level == "ERROR" or level == "WARN", "medium", "low")

Override Dataset Rules

To bypass Dataset rules, add a dataset field to your events before they reach Cribl Search. You can add this and other override fields in Cribl Stream, or in any upstream sender.

| Field | What Cribl Search Does |
|---|---|
| dataset | Skips Dataset rules and routes directly to the specified Dataset. If the Dataset doesn’t exist, routes to main with _dataset_reason = "does not exist". |
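The override behavior in the table can be summarized as a short decision function. This is a conceptual sketch in Python, not Cribl Search code: resolve_dataset, by_rules, and the Dataset name web_logs are hypothetical, and the sketch assumes that _dataset_reason is attached to the event as an ordinary field.

```python
from typing import Callable, Optional, Tuple


def resolve_dataset(
    event: dict,
    existing: set,
    apply_rules: Callable[[dict], Optional[str]],
) -> Tuple[Optional[str], dict]:
    """Return (destination, event); a destination of None means a rule dropped it."""
    requested = event.get("dataset")
    if requested is None:
        return apply_rules(event), event   # no override: Dataset rules decide
    if requested in existing:
        return requested, event            # override: Dataset rules are skipped
    # The override names a Dataset that doesn't exist: route to main and say why.
    return "main", {**event, "_dataset_reason": "does not exist"}


def by_rules(event: dict) -> Optional[str]:
    """Placeholder for normal Dataset rule evaluation."""
    return "rule_target"


existing = {"main", "web_logs"}

for ev in ({"dataset": "web_logs"}, {"dataset": "missing"}, {"msg": "no override"}):
    print(resolve_dataset(ev, existing, by_rules))
```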

Next Steps

Now that your data is organized into Datasets, you can start putting it to work. For example: